Automated Design System Governance

Scaling governance without extra headcount.

Automate what doesn’t need a human touch.

TL;DR

Manual governance doesn't scale past a certain team size. Built proof-of-concept (POC) automation tools to handle the repetitive stuff: consistency checks, intake validation, process guidance. Validated that AI could actually do this work before the team was restructured, leaving behind systems that run without human babysitting.

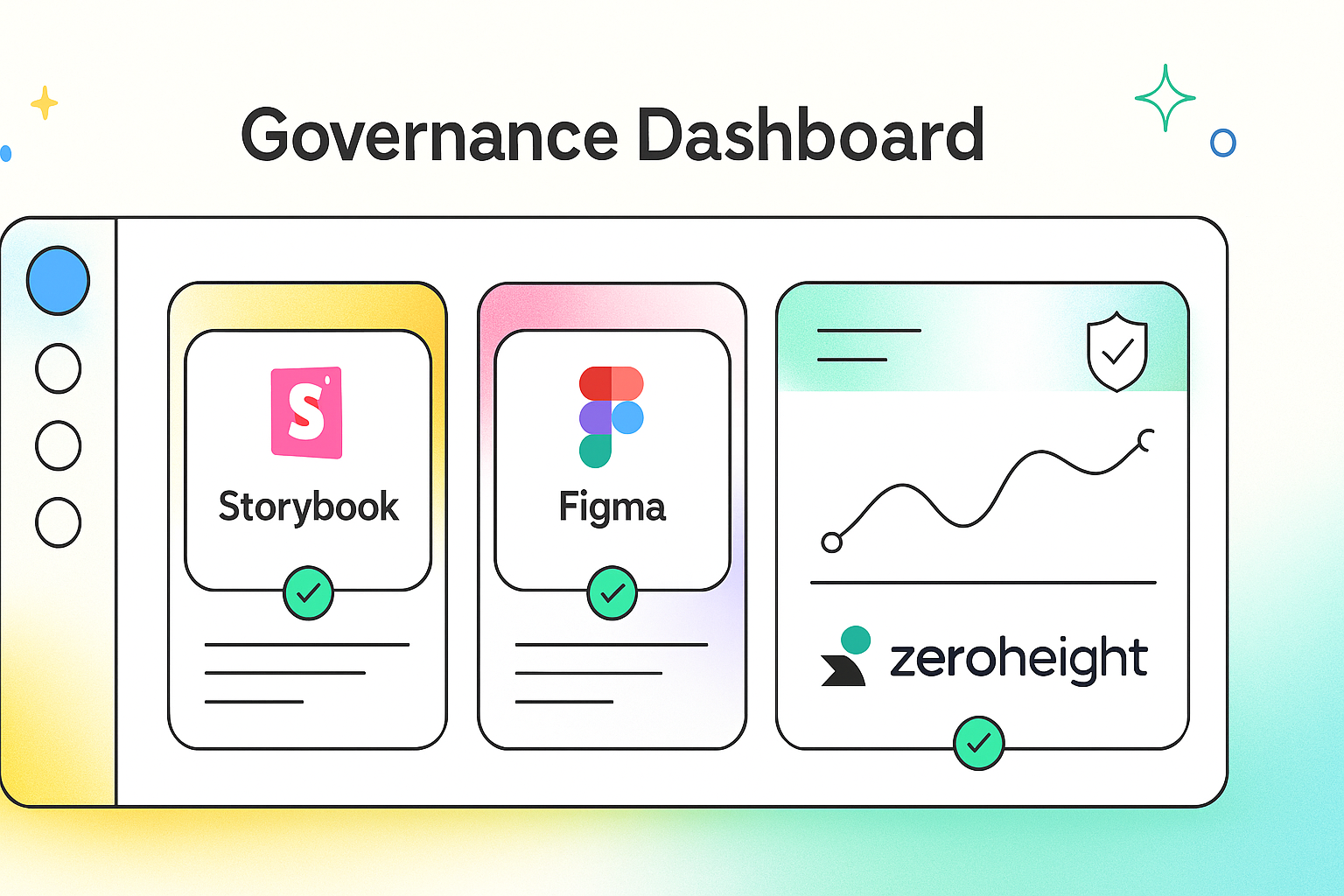

Hero Image: Dashboard showing automated governance across Storybook, Figma, and ZeroHeight

The Problem

At 35M+ users across multiple platforms, manual governance becomes a full-time job. Every consistency check, every incomplete Jira ticket, every "how do I do this process?" question eats team bandwidth. You end up being the service team instead of building the system.

The question: Can you maintain quality standards without humans doing the boring, repetitive checks?

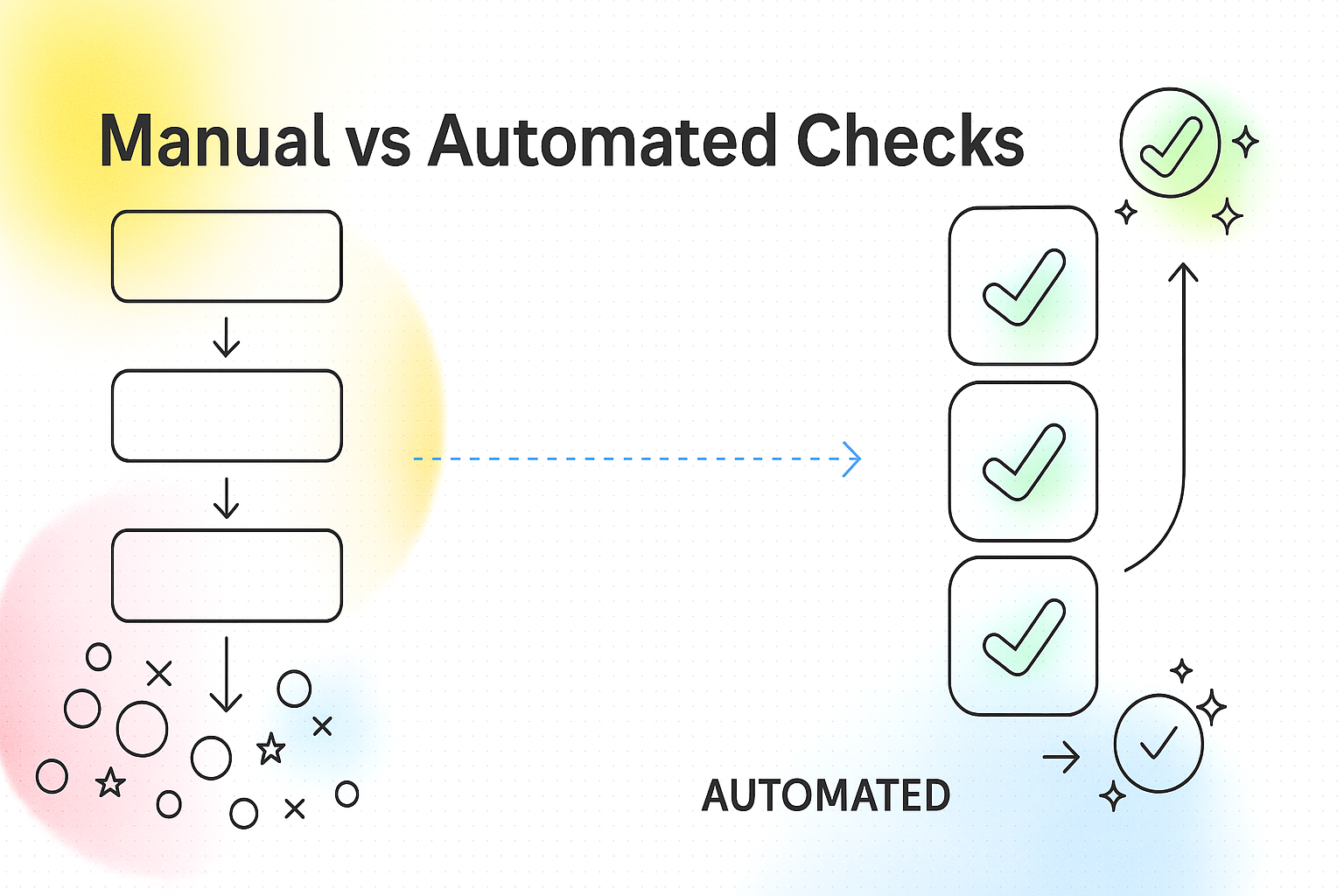

Process Diagram: Manual governance workflow showing bottlenecks vs automated checks

Approach

1. Map the repetitive stuff

Instead of randomly automating things, I catalogued what actually ate our time:

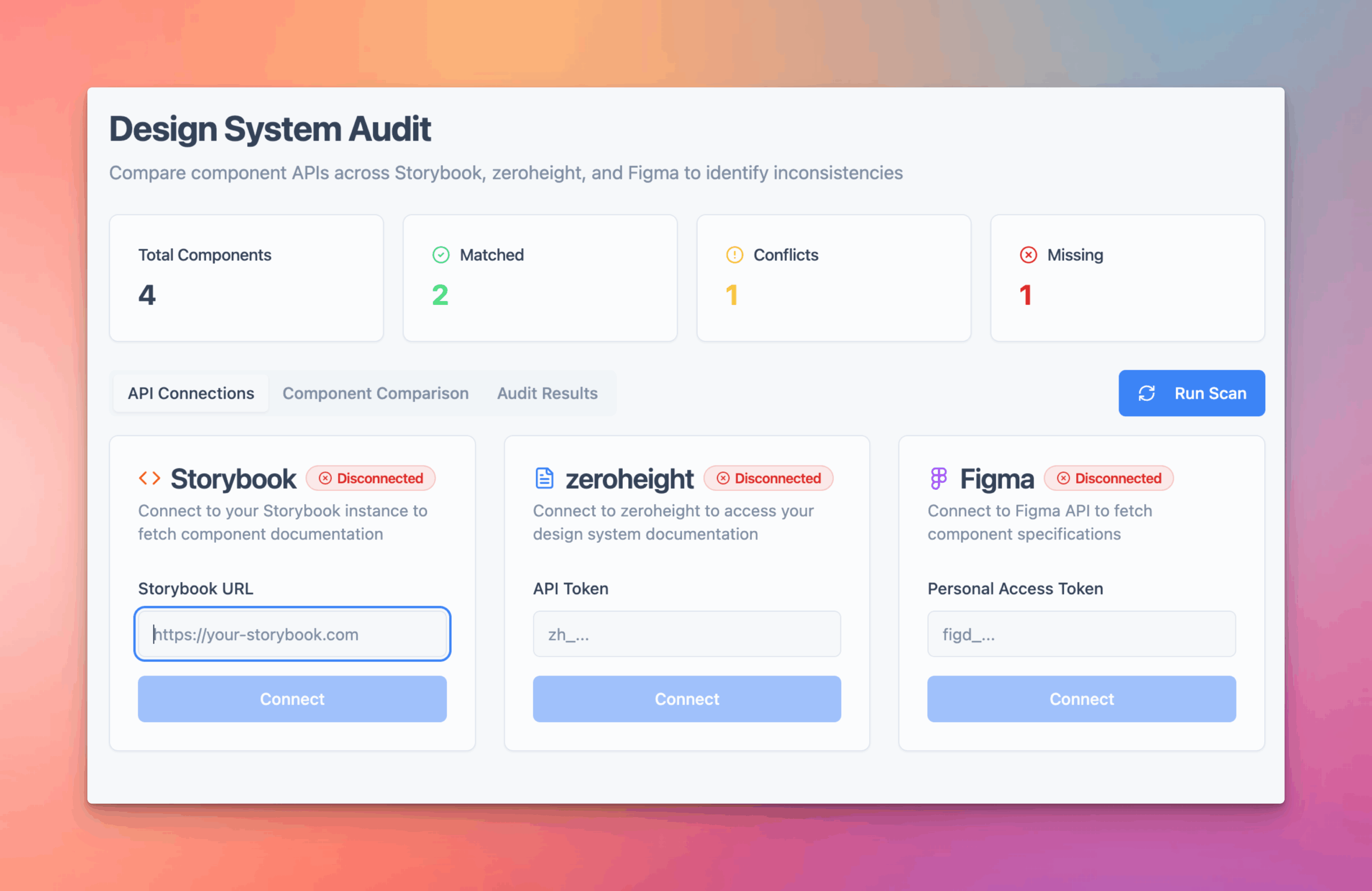

Checking consistency between Storybook, Figma, and ZeroHeight

Reviewing Jira tickets for basic compliance

Answering the same process questions repeatedly

Manually pulling metrics for leadership reports

Guessing at component adoption without real data
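The first item on that list is the most mechanical. At its simplest, a cross-tool consistency check compares component inventories. Here's a minimal sketch, assuming each tool's component names have already been exported into Python sets. The export step and the example names are hypothetical, and this isn't necessarily how the original tooling worked. The sketch reports which components each source is missing relative to the others.

```python
from typing import Dict, Set

def find_gaps(inventories: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """For each source, return the components that appear in some other source but not in it."""
    # Union of every component name seen in any source.
    all_components: Set[str] = set().union(*inventories.values())
    gaps: Dict[str, Set[str]] = {}
    for source, components in inventories.items():
        missing = all_components - components
        if missing:  # only report sources that actually have gaps
            gaps[source] = missing
    return gaps

# Hypothetical exports from each tool.
inventories = {
    "storybook": {"Button", "Card", "Modal"},
    "figma": {"Button", "Card", "Tooltip"},
    "zeroheight": {"Button", "Modal"},
}

for source, missing in sorted(find_gaps(inventories).items()):
    print(f"{source} is missing: {sorted(missing)}")
```

Exact name matching is the naive part. In practice, names often differ in casing or wording across tools, so a normalization or mapping step (or an LLM, which is what the POCs tested) would sit in front of the comparison.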

2. POC everything first

Built small experiments to see if automation actually worked before committing to anything big:

Tested if LLMs could spot real inconsistencies

Validated data pipeline feasibility

Confirmed ROI on time savings

What I Built


Ticket Intake Validation

Problem

Half the tickets that come in are missing user stories, acceptance criteria, or basic context

What I built

AI that screens tickets against Agile@Alight standards before they enter the backlog

Result

Caught bad tickets at intake instead of discovering problems mid-sprint
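The tool itself used AI for screening, but the most basic checks (is there a user story? are there acceptance criteria?) can be expressed as rules. This is a minimal rule-based pre-screen under stated assumptions: the regex patterns and the word-count threshold are hypothetical stand-ins, since the actual Agile@Alight standards aren't spelled out here.

```python
import re
from typing import List

# Hypothetical rules: the actual Agile@Alight standards aren't spelled out here.
USER_STORY = re.compile(r"\bas an? .+?,? i want .+?so that\b", re.IGNORECASE | re.DOTALL)
ACCEPTANCE_CRITERIA = re.compile(r"\bacceptance criteria\b", re.IGNORECASE)
MIN_WORDS = 20  # hypothetical threshold for "enough context"

def screen_ticket(description: str) -> List[str]:
    """Return a list of problems; an empty list means the ticket passes this pre-screen."""
    problems = []
    if not USER_STORY.search(description):
        problems.append("missing user story (As a ..., I want ..., so that ...)")
    if not ACCEPTANCE_CRITERIA.search(description):
        problems.append("missing acceptance criteria")
    if len(description.split()) < MIN_WORDS:
        problems.append(f"fewer than {MIN_WORDS} words of context")
    return problems

good_ticket = (
    "As a product designer, I want to export the component report so that I can "
    "share it with my team. Acceptance criteria: export button is visible; CSV "
    "downloads within five seconds; columns match the on-screen table."
)
print(screen_ticket(good_ticket))
print(screen_ticket("Fix the button"))
```

A deterministic pass like this is cheap enough to run on every ticket. One way to split the work (again an assumption) is to let rules catch missing sections and save the LLM for judgment calls, like whether the acceptance criteria are actually testable.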

Process Co-Pilot

Problem

The same process questions come up over and over; answering "how do I do X?" becomes a full-time support role

What I built

AI trained on our documentation that answers process questions instantly

Result

Team knowledge scaled without adding people to answer questions
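A common way to build a documentation Q&A assistant is retrieval-augmented generation: find the most relevant documentation passage, then hand it to an LLM as context. The source doesn't say the co-pilot worked this way, so treat this as an assumption. The sketch shows only the retrieval half, using plain keyword overlap instead of embeddings; the documentation snippets are invented for illustration.

```python
import re
from typing import Dict, List, Set, Tuple

# Common words that carry no signal for matching questions to docs.
STOPWORDS = {"a", "an", "the", "how", "do", "i", "to", "for", "of", "in", "is", "what", "and"}

def tokenize(text: str) -> Set[str]:
    """Lowercase, split on non-alphanumerics, and drop stopwords."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS

def retrieve(question: str, docs: Dict[str, str], top_k: int = 1) -> List[Tuple[str, int]]:
    """Return up to top_k (title, score) pairs ranked by keyword overlap with the question."""
    q = tokenize(question)
    scored = [(title, len(q & tokenize(title + " " + body))) for title, body in docs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return [pair for pair in scored[:top_k] if pair[1] > 0]

# Hypothetical documentation snippets.
docs = {
    "Requesting a new component": "Open a Jira ticket on the intake board with a user story and acceptance criteria.",
    "Contributing a component": "Add a Storybook story and request review from a maintainer.",
    "Reporting a bug": "File a bug ticket with steps to reproduce and screenshots.",
}

print(retrieve("How do I ask for a new component?", docs))
```

In a full assistant, the top passages would go into the LLM prompt so answers stay grounded in the docs; embeddings would typically replace keyword overlap once the documentation grows or phrasing varies a lot.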

Executive Reporting Automation

Problem

Leadership can't see design system value from technical metrics alone

What I built

Automated reports that translate technical metrics into business impact language

Result

Design system became a strategic asset instead of a mysterious cost center
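Part of that translation is plain arithmetic: convert counts the automation already produces into hours, then into money. The source doesn't say which metrics the reports used, so the metric names, per-event time savings, and hourly rate here are placeholders, not measured results. It's a sketch of the idea, not the actual report logic.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class AutomationMetric:
    name: str
    events_per_month: int            # e.g. tickets screened, questions answered
    minutes_saved_per_event: float   # estimated manual effort avoided per event

def business_summary(metrics: List[AutomationMetric], hourly_rate: float) -> str:
    """Translate raw automation counts into hours and cost, the units leadership reads."""
    lines = []
    total_hours = 0.0
    for m in metrics:
        hours = m.events_per_month * m.minutes_saved_per_event / 60
        total_hours += hours
        lines.append(f"{m.name}: ~{hours:.0f} hours/month returned to the team")
    cost = total_hours * hourly_rate
    lines.append(f"Total: ~{total_hours:.0f} hours/month (about ${cost:,.0f} at ${hourly_rate:.0f}/hour)")
    return "\n".join(lines)

# Placeholder inputs for illustration only, not measured results.
metrics = [
    AutomationMetric("Ticket intake screening", events_per_month=120, minutes_saved_per_event=10),
    AutomationMetric("Process questions answered", events_per_month=200, minutes_saved_per_event=6),
]
print(business_summary(metrics, hourly_rate=75))
```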

Component Usage Tracker

Problem

Making roadmap decisions based on who talks loudest, not actual usage data

What I built

Chrome extension that tracks real component usage across applications

Result

Data-driven roadmap instead of opinion-driven feature requests
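The source doesn't describe how the extension collects data. Assuming it reports one (application, component) record for each design system component instance it finds on a page (for example, by looking for a component-identifying attribute in the DOM, which is a hypothetical convention), the roadmap-relevant part is aggregation. This sketch counts instances and, more usefully, how many applications each component appears in.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List, Set, Tuple

def summarize_usage(records: Iterable[Tuple[str, str]]) -> List[Tuple[str, int, int]]:
    """Aggregate (app, component) records into (component, instances, apps) rows."""
    instances: Counter = Counter()
    apps: Dict[str, Set[str]] = defaultdict(set)
    for app, component in records:
        instances[component] += 1
        apps[component].add(app)
    rows = [(name, instances[name], len(apps[name])) for name in instances]
    # Rank by reach (number of apps), then by raw instance count, then by name.
    rows.sort(key=lambda row: (-row[2], -row[1], row[0]))
    return rows

# Hypothetical records as a browser extension might report them.
records = [
    ("app-a", "Button"), ("app-a", "Button"), ("app-a", "DataTable"),
    ("app-b", "Button"), ("app-b", "Modal"),
    ("app-c", "Button"), ("app-c", "Modal"),
]
for name, count, app_count in summarize_usage(records):
    print(f"{name}: {count} instances across {app_count} apps")
```

Ranking by how many applications use a component, rather than raw instance count, keeps one page full of buttons from dominating the picture; which ranking fits a given roadmap is a judgment call.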

AI Experience Design Patterns

Problem

AI tools in enterprise products need consistent interaction patterns; you can't have every team building a different AI experience

What I built

Design patterns and guidelines for how AI should appear in the user experience across all products

Result

Consistent AI integration across products instead of scattered, inconsistent implementations

Tool Screenshots: Collage showing interfaces of the 5 automation tools + AI design pattern examples

Results

Metrics Dashboard: Time savings, consistency scores, ticket quality improvements

Repeatable Process

Created a framework for finding automation opportunities instead of random tool-building

Technical Proof

Validated that LLMs can actually do design system governance work, not just in theory

Systems that Survive

When the team got restructured, the automated systems kept running without human maintenance

What I Learned

Governance is a systems problem, not a people problem

Manual processes create dependencies. Automation removes bottlenecks while maintaining standards.

Test small, then scale

POCs prevent you from building expensive solutions to problems that don't actually exist.

Data beats opinions

Real usage data is more reliable than stakeholder assumptions for roadmap decisions.

The Bigger Picture

This wasn't just about building AI tools - it was about proving that design systems can operate as platforms instead of service teams. The automation work validated that you can maintain quality standards at enterprise scale without proportional headcount growth. When organizations hit the scaling wall, they usually add more people to do manual checks. This approach proves there's a different path - one where systems handle the repetitive work and humans focus on strategic decisions.

Credit

DESIGN

Russell Beaver

Rafael Flores


© 2026 Russell Beaver. All rights reserved.